Auto Agentic Team

The Anatomy of a Failed Prompt: Why AI Gives You Bad Answers

Most people approach a large language model the same way they approach a search engine or a basic smart speaker. They type in a quick question, hit enter, and expect a perfect answer. When the output comes back as a generic, hallucinated, or unhelpful mess, the immediate reaction is usually to blame the machine.

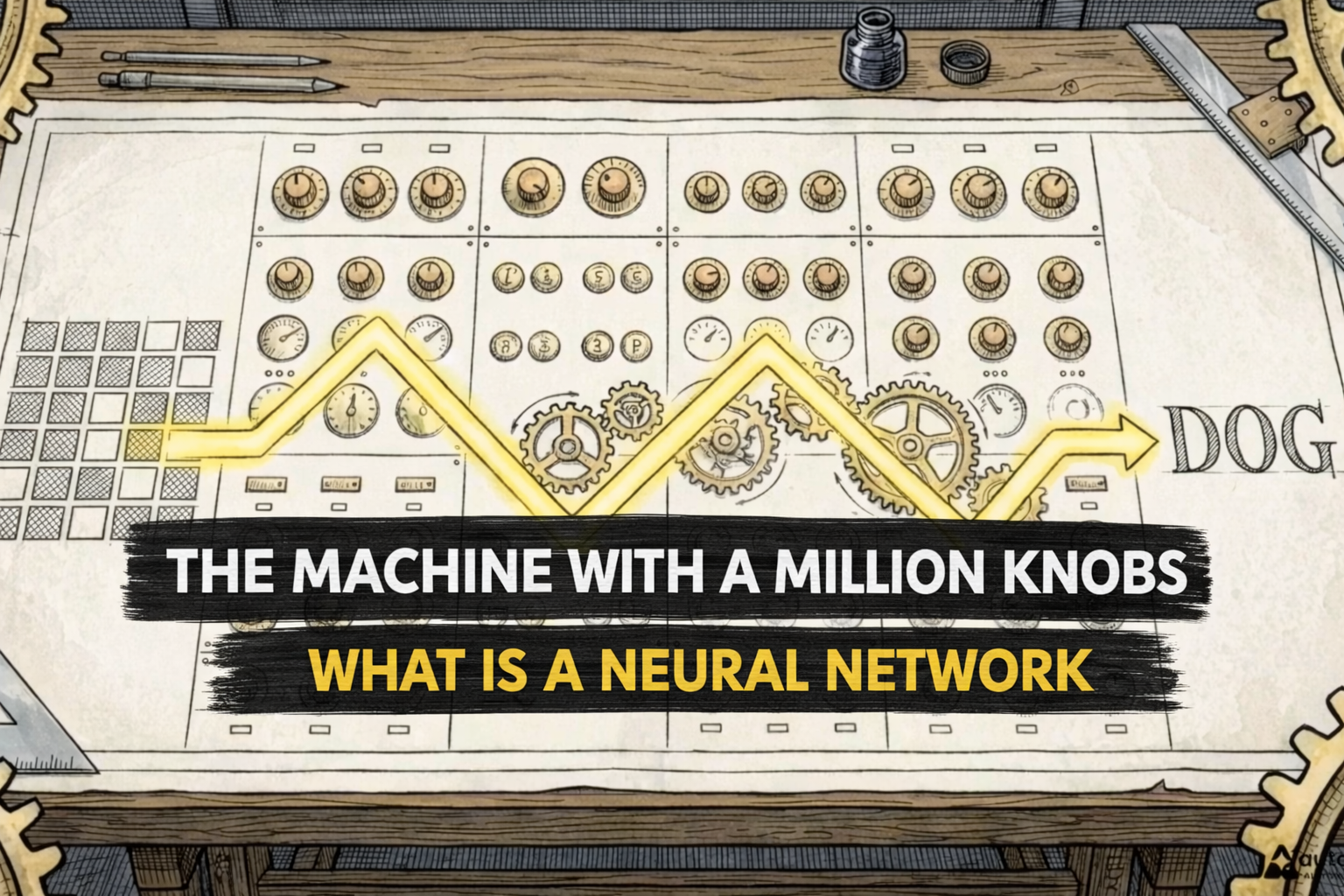

It is easy to assume the underlying model lacks the intelligence to understand the request. But a prompt is far more structured than a search query. It functions as a complete framework: a collection of instructions, boundaries, and specific context that directs the model's focus. More often than not, the machine fails because no human gave it that framework.

The Zero-Shot Trap

To see how failure happens in practice, consider giving an AI a complex, multi-step business analysis problem. You want it to evaluate a quarterly sales report, identify growth opportunities, and assess potential risks. If you just paste the raw data and ask for an analysis, you are using a zero-shot prompt.

Zero-shot prompting means giving the model no worked examples to imitate. Here the prompt also carries no role, no constraints, and no target format, so the model is left to figure things out entirely on its own. The result is an immediate, disorganized failure. The generated text reads like a generic template, it invents speculative data points that aren't in the original report, and it lacks any logical structure connecting sales figures to actual business strategies.
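As a rough illustration of the bare prompt described above, here is a minimal Python sketch. The sales figures and the name `build_zero_shot_prompt` are invented for this example; the article does not show the actual report.

```python
# Hypothetical sales figures, used only for illustration.
RAW_REPORT = """\
region,q1_revenue,q2_revenue
North,120000,132000
South,95000,90250
"""


def build_zero_shot_prompt(report: str) -> str:
    """Paste the data and ask a bare question: no role, no constraints, no examples."""
    return f"{report}\nAnalyze this report."


zero_shot_prompt = build_zero_shot_prompt(RAW_REPORT)
print(zero_shot_prompt)
```

Everything the model needs to know about audience, scope, and format is missing from this string, which is why the output tends to be generic.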

You have a broken, useless output. So how do you build a systematic, human-driven feedback loop to rescue this text?

Step 1: Role-Based Prompting

The first step to fixing a failed prompt is giving the AI a specific identity to anchor its response. You need to tell it exactly who is analyzing this data. This technique — role-based prompting — involves explicitly instructing the AI to act as a particular professional. In this case, an expert financial advisor.

This guides the AI to draw on specific knowledge and communication styles associated with that profession. With the role assigned, the output immediately improves. The tone shifts from generic text to professional analysis, and the perspective aligns with someone evaluating financial risk and reward.
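One way to express the role is as a separate system message. The sketch below assumes the common `role`/`content` chat-message layout; the function name and the exact wording of the messages are illustrative, not taken from any particular vendor's API.

```python
def build_role_messages(
    report: str, role: str = "an expert financial advisor"
) -> list[dict[str, str]]:
    """Put the role in a system message and the task in a user message."""
    system = f"You are {role}. Evaluate the data the way that professional would."
    user = (
        f"Quarterly sales report:\n{report}\n\n"
        "Identify growth opportunities and assess potential risks."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_role_messages("region,q1_revenue,q2_revenue\nNorth,120000,132000")
for message in messages:
    print(f"[{message['role']}] {message['content']}\n")
```

Keeping the role in its own message makes it easy to swap the persona without touching the task text.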

Step 2: Negative Prompting — Telling AI What NOT to Do

Assigning a role establishes the right context, but the resulting document is still dangerously cluttered. The AI is eager to play the part of a financial advisor, filling the page with dense, unfocused observations. It overcompensates, burying actual insights under heavy industry jargon and unnecessary tangents.

To clean this up, we use negative prompting — the practice of explicitly telling a generative model what to avoid producing, setting firm boundaries on its output. We update our instructions, specifically commanding the model to avoid making speculative claims about future quarters and to completely exclude industry jargon.

The second iteration yields a highly refined document. The tangents disappear, the vocabulary simplifies, and the analysis becomes clean. Telling an AI exactly what not to do is just as critical as telling it what to do.
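The two prohibitions described above can be appended to the prompt as an explicit list. This is a minimal sketch; `add_negative_constraints` and the phrasing of each constraint are hypothetical choices made for the example.

```python
# The two prohibitions from the text, phrased as direct instructions.
NEGATIVE_CONSTRAINTS = [
    "Do not make speculative claims about future quarters.",
    "Do not use industry jargon.",
]


def add_negative_constraints(prompt: str, constraints: list[str]) -> str:
    """Append a clearly labeled list of things the model must avoid."""
    bullet_lines = "\n".join(f"- {constraint}" for constraint in constraints)
    return f"{prompt}\n\nConstraints (what to avoid):\n{bullet_lines}"


base_prompt = "You are an expert financial advisor. Analyze the quarterly sales report."
constrained_prompt = add_negative_constraints(base_prompt, NEGATIVE_CONSTRAINTS)
print(constrained_prompt)
```

Listing each prohibition on its own line keeps the boundaries easy to audit and easy to extend after the next review of the output.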

Step 3: Why Few-Shot Prompting Can Backfire

The tone and format are now perfect, but a closer look at the text reveals a serious problem: the AI has miscalculated the projected growth margins, exposing a flaw in its core logic.

The logical next step might seem to be few-shot prompting — providing the AI with a few correctly solved examples within the prompt itself so it can learn the pattern. But for reasoning-heavy tasks, providing examples can actually backfire.

The AI replicates the exact visual structure of your examples but inserts the wrong logic and mathematical variables into the new problem, degrading overall performance. Reported results suggest that adding examples to complex puzzles often biases the model: instead of reasoning through the new problem, it copies the surface patterns of the examples provided.
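For reference, a few-shot prompt is usually just solved examples placed ahead of the new question. The sketch below uses invented examples and a hypothetical `build_few_shot_prompt` helper; it shows the surface pattern (`Q:` / `A:` with a single formula) that a model may copy even when the new problem needs different logic.

```python
# Invented worked examples; both use the same simple growth formula.
EXAMPLES = [
    ("Q1 revenue 100, Q2 revenue 110. Growth?", "Growth = (110 - 100) / 100 = 10.0%"),
    ("Q1 revenue 200, Q2 revenue 190. Growth?", "Growth = (190 - 200) / 200 = -5.0%"),
]


def build_few_shot_prompt(examples: list[tuple[str, str]], question: str) -> str:
    """Prefix the new question with solved examples in a fixed Q/A layout."""
    shots = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in examples)
    return f"{shots}\n\nQ: {question}\nA:"


few_shot_prompt = build_few_shot_prompt(
    EXAMPLES,
    "Q1 revenue 120000 with 30% cost, Q2 revenue 132000 with 35% cost. Margin change?",
)
print(few_shot_prompt)
```

The new question asks about margins, but every example demonstrates plain revenue growth, so a model that imitates the layout can end up applying the wrong formula.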

Step 4: Chain-of-Thought Prompting

Mimicking examples is not enough for complex problem solving. We must force the AI to actively generate its own logical path from scratch.

Large language models often become markedly more accurate on multi-step problems with chain-of-thought prompting: instructing the model to think step by step, so that it writes out intermediate reasoning steps before its final answer. Writing those steps out gives the model room to check its work, catch logical errors, and self-correct along the way.

Applying this process to our business analysis, the AI evaluates each sales figure in sequence. The math is corrected and the logic holds. The model is still predicting one token at a time, but each prediction is now conditioned on reasoning it has already written down rather than on a leap straight to the answer.
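A step-by-step instruction can be sketched as below, together with a small deterministic check a human reviewer could use to confirm the model's growth arithmetic. The function names, the `Final answer:` convention, and the revenue figures are assumptions made for this example.

```python
def build_cot_prompt(task: str) -> str:
    """Ask for visible intermediate steps before the final answer."""
    return (
        f"{task}\n\n"
        "Think step by step. For each region, state the figures you used and "
        "show the calculation. Then give the result on a line starting with "
        "'Final answer:'."
    )


def growth_pct(previous: float, current: float) -> float:
    """Quarter-over-quarter growth as a percentage, for checking the model's math."""
    return (current - previous) * 100 / previous


cot_prompt = build_cot_prompt("Compute quarter-over-quarter revenue growth per region.")
print(cot_prompt)

# Hypothetical figures: North grew from 120000 to 132000, South fell from 95000 to 90250.
north_growth = growth_pct(120000, 132000)
south_growth = growth_pct(95000, 90250)
print(f"North: {north_growth:.1f}%  South: {south_growth:.1f}%")
```

Because the model's intermediate steps are now on the page, each one can be compared against an independent calculation like `growth_pct` instead of being taken on trust.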

The CLEAR Framework

Stepping back from our sales report, we can look at the overall transformation we orchestrated. We took a chaotic, hallucinated output and carefully engineered it into a precise, expert-level document.

This entire iterative process can be standardized using the CLEAR framework:

- Concise — Keep instructions focused and specific

- Logical — Use step-by-step reasoning (chain of thought)

- Explicit — Assign clear roles and identities

- Adaptive — Apply negative constraints to steer output

- Reflective — Continuously iterate and refine
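The five elements above can be combined into one small prompt specification that is revised after each review of the output. This is only a sketch of one possible structure; `PromptSpec`, `refine`, and the field names are hypothetical and not part of any published definition of the framework.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PromptSpec:
    task: str                     # Concise: one focused instruction
    role: str = ""                # Explicit: who is answering
    avoid: tuple[str, ...] = ()   # Adaptive: negative constraints
    step_by_step: bool = False    # Logical: ask for intermediate reasoning

    def render(self) -> str:
        """Assemble the prompt text from whichever parts are set."""
        parts = []
        if self.role:
            parts.append(f"You are {self.role}.")
        parts.append(self.task)
        if self.avoid:
            parts.append("Avoid:\n" + "\n".join(f"- {item}" for item in self.avoid))
        if self.step_by_step:
            parts.append("Think step by step before giving the final answer.")
        return "\n\n".join(parts)


def refine(spec: PromptSpec, **changes) -> PromptSpec:
    """Reflective: each review of the output yields a revised copy of the spec."""
    return replace(spec, **changes)


v1 = PromptSpec(task="Analyze the attached quarterly sales report.")
v2 = refine(v1, role="an expert financial advisor")
v3 = refine(v2, avoid=("speculative claims about future quarters", "industry jargon"))
v4 = refine(v3, step_by_step=True)
print(v4.render())
```

Each version corresponds to one pass of the walkthrough: the bare request, the added role, the negative constraints, and finally the step-by-step instruction. Keeping earlier versions immutable makes it easy to compare outputs across iterations.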

The Human Factor

Prompt engineering is never a one-off command. It is a continuous, reflective, and adaptive conversation between a human and a machine. Generative AI operates best as an extension of human thinking, rather than a total replacement for it.

Accessing a model's most complex capabilities requires incredibly rigorous, structured human direction. Raw computing power operates in a vacuum — it is useless without a human orchestrator to explicitly guide that power into a specific, useful structure.

Language models will continue to grow smarter and faster, but the ultimate limiting factor to their capability will always be the clarity of the instructions we give them.